PixVerse V6 Review: New Features, Performance & Honest Verdict (2026)

PixVerse V6 officially launched on March 30, 2026, and it's already generating a lot of buzz. If you've ever been excited by a few stunning AI video clips, only to get frustrated trying to turn them into a coherent story (characters drifting, lighting jumping, no sound, endless stitching), this update might finally offer some real relief.

With a new emphasis on camera control, native audio synthesis, and a dedicated Multi-Shot engine, V6 positions itself as more than just another incremental upgrade. In this review, I'll break down what's actually new, how it compares to V5.6 in real workflow terms, and which prompting habits help most, then help you decide whether it's worth jumping on right after launch.

What Is PixVerse V6?

PixVerse V6 is the latest model from PixVerse, an AI video generation platform aimed at content creators, marketers, and independent filmmakers who need production-quality clips without a full editing team behind them.

Earlier versions of PixVerse worked best for short, stylized clips that stood on their own as social posts. V6 shifts toward what PixVerse describes as a "model-driven workflow." In practice, that means longer continuous output, more accurate physical simulation, and tools designed to slot into a real content pipeline rather than stopping at the experiment stage.

The four headline upgrades are 15-second 1080p output in a single generation, built-in audio synthesis, a native multi-shot engine for scene transitions, and flexible output ratios for different platforms. If you want to learn how to make an AI video step by step, V6 is one of the more complete starting points available right now.

PixVerse V6 Key Features

The improvements in V6 aren't about making every single frame more beautiful; V5.6 could already produce impressive visuals in short bursts. Instead, the focus is on reliability over time and on basic storytelling needs.

15-Second 1080p Single-Pass Generation

This is the one that changes the day-to-day workflow the most. V6 can generate a full 15-second 1080p clip in a single pass, no stitching required. That might sound like a minor spec bump, but if you've ever tried to produce anything longer than 5 seconds in earlier AI video tools, you know the stitching process is where most of the time and frustration go.

Every time you joined two clips, something changed: the product texture would shift slightly, the character's proportions would drift, the lighting would flip for no clear reason. V6 eliminates that problem at the source. Lighting, texture, and subject features stay consistent from frame one to frame 450. For product content, short films, or any video that needs to look coherent, this is the upgrade that actually matters.

Multi-Shot Storytelling Engine

Getting multiple camera angles to look like they belong in the same video has always been one of the harder AI video challenges. In earlier tools, every transition (wide shot to close-up, exterior to interior) needed its own generation, and matching the shots afterward was a grind.

V6 handles this with a native multi-shot engine. The model tracks spatial relationships internally, so Shot 2 picks up where Shot 1 left off: same environment, same lighting logic, same surface materials. It's not perfect in every scenario, but for structured sequences like ad spots or documentary cutaways, it removes a significant chunk of the iteration work.

Native Audio Synthesis

One of my favorite changes is the built-in audio generation. Instead of always receiving a silent video that needs separate sound design later, V6 can generate basic dialogue, lip-sync, and ambient sounds together with the visuals. For quick social media pieces or story drafts, this can cut down post-production time significantly. The audio quality isn't studio-level yet, but having it included from the beginning changes how you approach the entire creative process.

Flexible Multi-Resolution and Aspect Ratio Support

V6 lets you define the output ratio and resolution upfront, so the model composes the scene with the final platform in mind. This helps avoid the awkward cropping and wasted space that often result when you reformat footage after generation. It's a small but smart quality-of-life improvement for creators publishing across multiple platforms.
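To see why choosing the ratio upfront matters, it helps to know the pixel frame each ratio implies. Below is a minimal sketch with a hypothetical `frame_size` helper. It assumes that "1080p" means the shorter side is 1080 pixels (a common convention), and it rounds the longer side to an even number. PixVerse's actual output dimensions are not stated here and may differ.

```python
def frame_size(ratio: str, short_side: int = 1080) -> tuple[int, int]:
    """Return (width, height) in pixels for an aspect ratio such as "16:9".

    Assumption: the shorter side equals `short_side` (1080 for "1080p"),
    and the longer side is rounded to the nearest even number, since many
    video encoders expect even dimensions.
    """
    w, h = (int(part) for part in ratio.split(":"))
    if w <= 0 or h <= 0:
        raise ValueError(f"invalid ratio: {ratio!r}")
    long_side = short_side * max(w, h) / min(w, h)
    long_side = 2 * round(long_side / 2)  # snap to an even pixel count
    # Landscape and square keep the short side as height; portrait flips it.
    return (long_side, short_side) if w >= h else (short_side, long_side)


if __name__ == "__main__":
    for r in ("16:9", "9:16", "1:1", "4:5"):
        print(r, frame_size(r))
```

A vertical 9:16 frame and a horizontal 16:9 frame contain the same number of pixels but frame the subject very differently, which is why cropping one into the other after generation tends to waste part of the shot.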

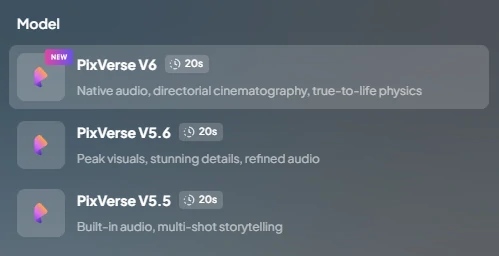

PixVerse V6 vs V5.6: What Actually Changed?

V5.6 was excellent for quick, stylized experiments, but many creators (including me) often found themselves limited when trying to build anything beyond short standalone clips. V6 shifts the emphasis toward longer, more reliable output and better workflow integration.

Here's my honest side-by-side comparison across the dimensions that actually matter for daily content creation:

| Aspect | PixVerse V5.6 | PixVerse V6 | My Take as Reviewer |
|---|---|---|---|
| Best For | Short stylized clips & visual experiments | Coherent 15-second narrative & story-driven pieces | V6 finally feels like it can support real mini-stories instead of just pretty fragments |
| Continuity & Stability | Strong in 5-second bursts, often breaks beyond that | Improved across the full 15 seconds with better temporal coherence | The biggest practical win: far less stitching and fixing needed |
| Multi-Shot Capability | Limited; required heavy prompt repetition | Dedicated Multi-Shot engine with better context memory | Makes simple scene transitions actually usable for the first time |
| Audio Handling | Silent output only; all sound added in post | Native audio with basic lip-sync and ambient sounds | Huge time-saver for social content and rough drafts |
| Workflow Practicality | Great for exploration, but heavy editing afterward | Closer to production-ready assets with less post-work | V6 moves AI video from "toy" toward "tool I can actually rely on" |

In my experience, if your primary goal is creating highly artistic 5-second Reels or TikToks, V5.6 is still perfectly capable. However, if you want to produce short narrative sequences, brand videos, or any content that benefits from better consistency and less manual cleanup, V6 represents a clear upgrade in real-world usability.

PixVerse V6 in Action: 3 Scenarios That Show What It Can Do

PixVerse published three test scenarios alongside V6.

Scenario 1: Anime Character with Japanese Lip-Sync

A non-English dialogue scene with anime-style character features is a deliberately difficult starting point: it combines character consistency, multilingual audio, and emotional performance in one clip. Choosing it as a showcase test signals that PixVerse is confident in V6's ability to hold complex character details across the full 15-second window. That's good news for creators working in animation, character content, or non-English markets.

Scenario 2: High-Speed POV with Fisheye Distortion

Extreme lens distortion plus fast motion is a classic stress test for any video model; it's where weaker physical simulation shows up as smearing or structural collapse. The fact that PixVerse chose a fisheye POV as a benchmark suggests they're specifically signaling improved camera physics. For creators doing action content or anything with unconventional optics, this is the scenario worth paying attention to.

Scenario 3: Large-Scale Destruction with Competing Visual Elements

This one tests subject-background separation under maximum visual pressure: a large subject competing with debris, sparks, smoke, and dynamic lighting, all at once. It's the scenario most relevant to game trailer and action content creators, and it's the test that historically exposed how quickly AI models lose track of "what the audience is supposed to be looking at" when the scene gets busy.

For more detailed examples and official benchmark results, you can refer to PixVerse’s official V6 guide.

How to Use PixVerse V6: 5 Steps to Your First Video

Getting started with V6 doesn't need to be complicated. Here's a straightforward workflow that works well for most users:

1. Choose the Engine and Resolution

Open PixVerse, select V6 as the model, and set the resolution to 1080p. Doing this first keeps you from generating at a lower quality and then needing to upscale later.

2. Set Your Output Parameters

Choose the aspect ratio that matches your target platform: 9:16 for vertical short-form, 16:9 for wider videos. Lock in the 15-second duration from the beginning.

3. Write a Clear, Literal Prompt

Focus on describing exactly what the camera sees and what should be heard. Specific details about positioning, lighting, movement, and sound usually produce more predictable and consistent results than vague emotional descriptions.

4. Enable Multi-Shot and Audio Options

For simple scene transitions, turn on Multi-Shot and keep key character and environment descriptions consistent across shots. Activate native audio if you want basic sound included in the generation.

5. Generate, Review, and Refine

Watch the full clip carefully for motion smoothness and consistency. Small tweaks to motion strength or prompt wording often lead to much better second attempts.
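Step 4's advice to keep character and environment descriptions consistent across shots is easiest to follow if you never retype those descriptions by hand. Below is a minimal sketch that assumes you draft prompts in a script before pasting them into the PixVerse interface. The `ShotSpec` structure and `build_shot_prompts` helper are hypothetical, and nothing here calls a PixVerse API. The code only produces text, repeating the shared character and environment wording verbatim in every shot.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShotSpec:
    """One shot in a multi-shot sequence (hypothetical structure)."""
    camera: str  # framing and camera movement, e.g. "wide tracking shot"
    action: str  # what the subject visibly does in this shot


def build_shot_prompts(character: str, environment: str, shots: list[ShotSpec]) -> list[str]:
    """Return one prompt per shot, reusing the exact same character and environment text."""
    prompts = []
    for i, shot in enumerate(shots, start=1):
        prompts.append(
            f"Shot {i}: {shot.camera}. {character}, {shot.action}. Setting: {environment}."
        )
    return prompts


if __name__ == "__main__":
    character = "A red fox with a white-tipped tail"
    environment = "pine forest at dawn, low golden side light from the left"
    shots = [
        ShotSpec("wide tracking shot", "walking steadily from left to right"),
        ShotSpec("close-up at ground level", "pausing to sniff a frosted log"),
    ]
    for prompt in build_shot_prompts(character, environment, shots):
        print(prompt)
```

Keeping the shared descriptions in one place means a wording tweak propagates to every shot at once, which is exactly the kind of consistency the Multi-Shot engine benefits from.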

💡Pro tip: If you want to test V6 (and compare it quickly with other top models) without switching between different websites, platforms like SeaArt AI offer a clean browser-based interface that gives you access to multiple leading AI video models and tools in one place.

Prompting Tips That Make a Real Difference

The single biggest improvement in results comes from changing how you write prompts. Move away from creative writing style ("a magical and energetic forest scene") and toward clear, observable details ("wide tracking shot through pine trees with morning side light, a fox walking steadily from left to right, leaves rustling").

This “literal” approach gives the model concrete elements to work with instead of forcing it to guess at mood or atmosphere. Over time, you'll waste fewer credits and get usable outputs faster.
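One way to build the literal habit is to treat each prompt as a checklist of observable elements. Below is a minimal sketch with a hypothetical `literal_prompt` helper. Its required fields (camera, lighting, subject, motion, sound) are an assumption chosen to mirror the fox example above, not a PixVerse requirement. If any required element is missing, the helper raises an error, so a gap in the description is caught before you spend a credit.

```python
REQUIRED_FIELDS = ("camera", "lighting", "subject", "motion", "sound")


def literal_prompt(**fields: str) -> str:
    """Join observable scene elements into one comma-separated prompt.

    Raises ValueError when a required element is missing or blank.
    """
    missing = [name for name in REQUIRED_FIELDS if not fields.get(name, "").strip()]
    if missing:
        raise ValueError(f"missing observable details: {', '.join(missing)}")
    # Fixed order: framing first, then light, subject, motion, and sound.
    ordered = [fields[name].strip() for name in REQUIRED_FIELDS]
    # Any extra, non-empty fields (e.g. lens) are appended in the order given.
    extras = [v.strip() for k, v in fields.items() if k not in REQUIRED_FIELDS and v.strip()]
    return ", ".join(ordered + extras)


if __name__ == "__main__":
    print(literal_prompt(
        camera="wide tracking shot through pine trees",
        lighting="morning side light",
        subject="a fox",
        motion="walking steadily from left to right",
        sound="leaves rustling",
    ))
```

The fixed field order is a stylistic choice. Leading with framing and light tends to keep prompts comparable from one attempt to the next, which makes it easier to tell which tweak actually changed the result.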

FAQs

Can I use videos generated with PixVerse V6 for commercial projects?

Yes, when using PixVerse V6 on SeaArt AI, generated videos are permitted for commercial use, including marketing, social media campaigns, and client work. However, always double-check the specific licensing terms on SeaArt or PixVerse, as rights can vary slightly by plan tier.

How well does character consistency hold up in Multi-Shot mode?

It performs noticeably better than previous versions on simple sequences, but very complex characters or rapid movements can still show some drift. Repeating key descriptive details across every shot helps maintain stability.

Is PixVerse V6 beginner-friendly?

Yes, the interface remains approachable, and the longer output length actually makes it easier for new users to see meaningful results without advanced editing skills.

Final Thoughts

Wrapping up this PixVerse V6 review, I have to say it's not a magical fix that replaces traditional video production overnight, but it's definitely one of the most practical steps forward I've seen in AI video this year.

For hobbyists and content creators who are tired of short experimental clips and want to create more structured short-form videos, PixVerse V6 feels like a real improvement in both reliability and workflow efficiency.

If you're exploring AI video models and want a convenient way to test multiple leading models (including PixVerse V6) in one interface, SeaArt AI makes it easy to access a variety of popular AI video generation tools directly in your browser. No complicated setup, just straightforward creation and experimentation.