Seedance 2.0 on SeaArt AI: Guide to the SOTA Video Model

Seedance 2.0 has been generating serious interest since ByteDance announced it. Multimodal input, multi-shot coherence, and built-in video editing put it in a different category from most AI video tools available in early 2026.

ByteDance's current SOTA video model has officially landed on SeaArt AI. Full capability, ready to use.

This page covers what it actually does, how to access it on SeaArt AI, and practical prompt structures that get results.

TL;DR

Seedance 2.0 is ByteDance's latest video generation model, now on SeaArt AI. You can upload up to 12 files (images, videos, audio), tag them in your prompt, and get a multi-shot video where the same character holds across every cut. Audio syncs too.

What's New in Seedance 2.0

Seedance 2.0 shifts how you control AI video generation. You can input any combination of text, images, videos, and audio. Specify what you need, and the model handles the rest.

| Feature | What it does |
|---|---|
| 📸 Reference images | Precisely restore composition, character details, and visual style |
| 🎥 Reference videos | Copy camera language, complex action choreography, and creative effects |
| 🔗 Seamless extension | Keep a shot going from your prompt: not just "generate," but "keep shooting" |
| ✂️ Advanced editing | Replace characters, remove elements, or add new ones to existing videos |

How the reference system works:

Use @ tags to assign a specific role to each uploaded file: for example, @image1 as the opening frame, @video1 as the camera-movement reference, and @audio1 as background music. This direct linking gives you precise control over every element in your generated video.

Multi-Shot Sequences from One Prompt

Describe a narrative with timing blocks and the model generates connected shots across the full sequence. In one example sequence, a character goes through three distinct visual states (human soldier, partial cyborg, full mecha) across separate shots, yet the facial structure, outfit base, and body proportions stay locked. That's the character consistency system at work: RoPE (rotary positional embedding) tracks identity across cuts, so you don't have to manage consistency manually between generations. The model holds it.

How to Extend Videos: Seamless Editing

Seedance 2.0 supports four editing modes: backward extension (continue the story), forward extension (add a prequel), plot rewriting (change the narrative), and local adjustments (modify specific elements). The example below shows forward extension, adding context before the main scene.

Original video: A man bumps into a woman on the street. She's angry; he apologizes. A simple 7-8 second interaction.

Forward extension prompt:

Combine with @video1 to add the opening scene: main character receives a phone call while walking on the street. Maintain consistent visual style.

The prequel adds narrative context. When combined with the original collision scene, you get a complete story arc showing motivation and consequence.

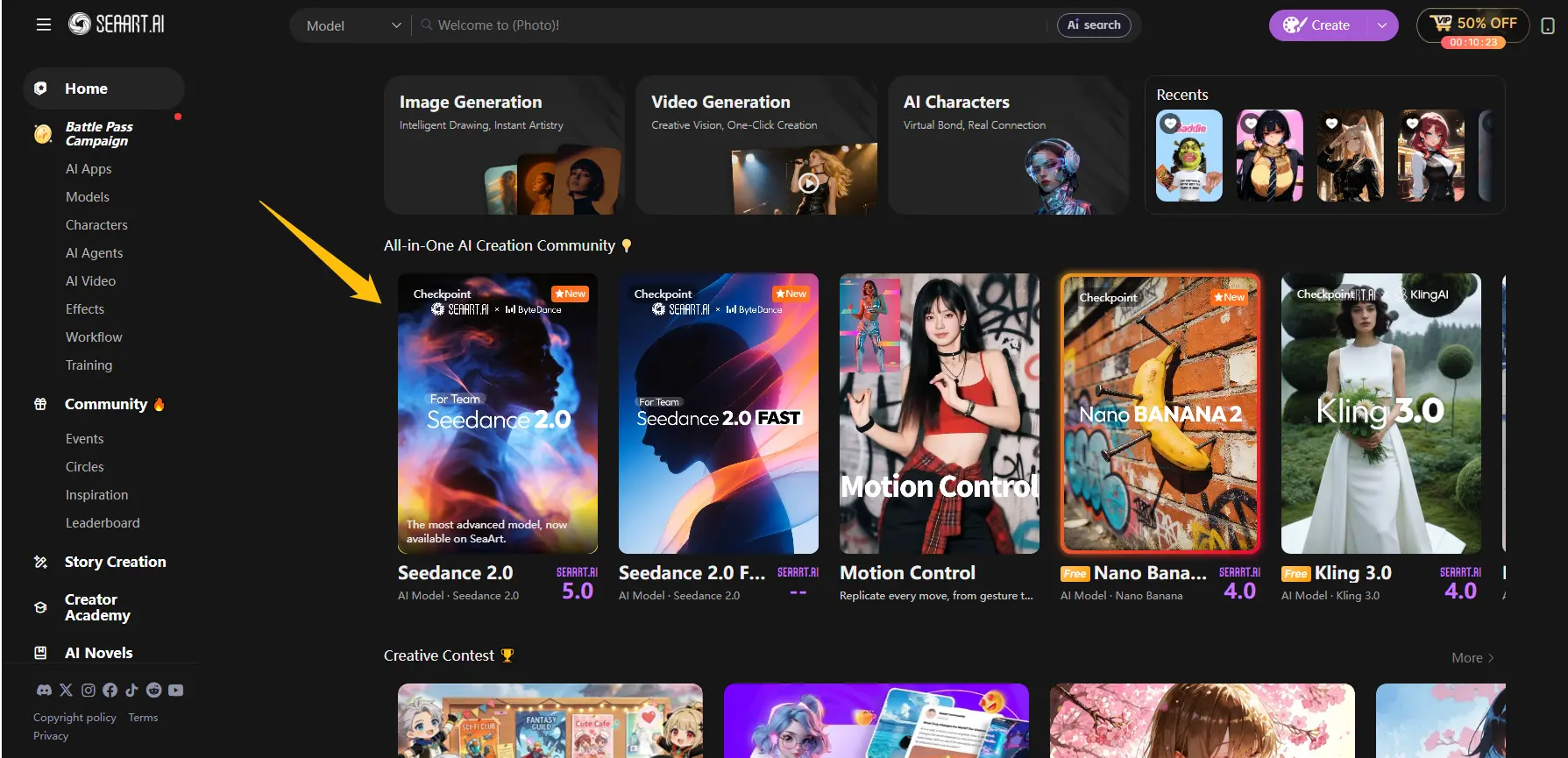

How to Use Seedance 2.0 on SeaArt AI

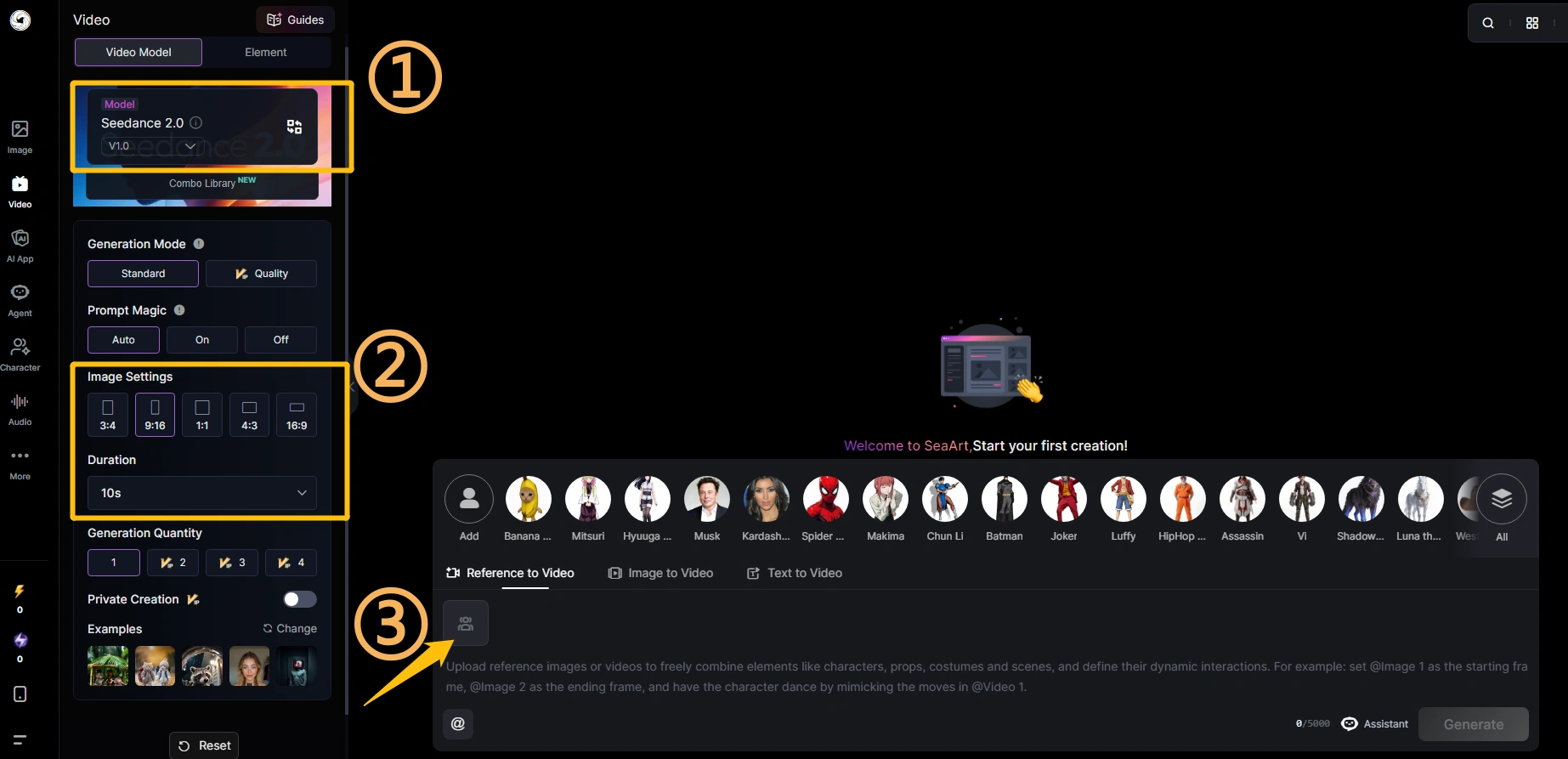

Two models are available: Seedance 2.0 and Seedance 2.0 Fast. Open either one and follow these three steps:

1. Choose your mode. The prompt area has three tabs: Reference to Video (upload images or videos and use @ tags to combine characters, props, and scenes), Image to Video (upload one image and describe the motion), or Text to Video (prompt only, no upload needed).

2. Upload your assets and set parameters. In Reference to Video mode, each uploaded file gets a tag automatically (@Image 1, @Image 2, @Video 1) for use in your prompt. Set aspect ratio (3:4, 9:16, 1:1, 4:3, 16:9), duration (4s to 15s), and Generation Mode (Standard or Quality).

3. Write your prompt and generate. Describe motion, camera direction, mood, and audio cues. Reference your uploaded files by tag where relevant. Hit Generate and download from the result view once done.
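If you draft a lot of generations, a quick local check of your settings can save credits lost to typos. The sketch below is a hypothetical Python helper, not part of SeaArt AI: the allowed values are copied from step 2 above (five aspect ratios, 4s to 15s, Standard or Quality) and may change on the platform, and it assumes durations are whole seconds.

```python
# Hypothetical local pre-flight check for Seedance 2.0 settings on SeaArt AI.
# It does NOT call SeaArt AI; the allowed values are copied from this guide.
ASPECT_RATIOS = {"3:4", "9:16", "1:1", "4:3", "16:9"}
MODES = {"Standard", "Quality"}
MIN_SECONDS, MAX_SECONDS = 4, 15  # assumption: whole seconds only


def check_settings(aspect_ratio: str, duration_s: int, mode: str) -> list[str]:
    """Return a list of problems; an empty list means the settings look valid."""
    problems = []
    if aspect_ratio not in ASPECT_RATIOS:
        problems.append(f"unsupported aspect ratio: {aspect_ratio}")
    if not MIN_SECONDS <= duration_s <= MAX_SECONDS:
        problems.append(f"duration must be {MIN_SECONDS}-{MAX_SECONDS}s, got {duration_s}s")
    if mode not in MODES:
        problems.append(f"unknown generation mode: {mode}")
    return problems


print(check_settings("16:9", 10, "Quality"))  # -> []
print(check_settings("21:9", 20, "Fast"))     # three problems
```

Note that "Fast" gets flagged here on purpose: Seedance 2.0 Fast is a separate model you open, not a Generation Mode setting.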

How to Write Prompts for Seedance 2.0

Seedance 2.0's prompt adherence is noticeably stronger than earlier versions. It follows specific instructions rather than just the general tone. How you structure your prompt still matters. For 20+ copy-paste templates covering cinematic trailers, dance videos, action scenes, and product ads, see the Seedance 2.0 prompt guide.

When using multiple references, specify each file's role

Uploading a file without context forces the model to guess its purpose. Tell it exactly what each reference controls.

Show the dancer from @image1 performing on stage. Use the choreography and movements shown in @video1. Set the scene in the studio environment from @image2. Sync each movement transition to the beat of @audio1. Cinematic style, warm stage lighting.
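The same discipline can be enforced when you draft prompts outside the app. This hypothetical Python helper (the function name and role phrasing are illustrative, not a SeaArt AI feature) refuses to build a prompt if any uploaded tag has no role, or if a role points at a tag you never uploaded. It then writes one "Use @tag ..." sentence per file, in upload order.

```python
# Hypothetical prompt-drafting helper: every uploaded file must get an explicit role.
def build_reference_prompt(uploads: list[str], roles: dict[str, str], style: str) -> str:
    """Build a prompt that states each reference's role, then appends a style line."""
    missing = [tag for tag in uploads if tag not in roles]
    if missing:
        # An untagged upload would leave the model guessing its purpose.
        raise ValueError(f"no role given for: {', '.join(missing)}")
    unknown = [tag for tag in roles if tag not in uploads]
    if unknown:
        raise ValueError(f"role given for a file that was not uploaded: {', '.join(unknown)}")
    sentences = [f"Use @{tag} {roles[tag]}." for tag in uploads]
    sentences.append(style)
    return " ".join(sentences)


uploads = ["image1", "video1", "image2", "audio1"]
roles = {
    "image1": "as the dancer performing on stage",
    "video1": "for the choreography and movements",
    "image2": "as the studio environment",
    "audio1": "as the beat for each movement transition",
}
print(build_reference_prompt(uploads, roles, "Cinematic style, warm stage lighting."))
```

Failing loudly on a missing role is the point of the design: it catches the "uploaded but never mentioned" case before you spend credits on it.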

Use timing blocks for multi-shot sequences

Label each segment with exact time ranges. The model reads these as shot boundaries and generates connected scenes with consistent continuity across cuts.

Strong rhythmic music-driven montage with fast cuts, slow motion, macro, and time-lapse. Audio: beats, bass pulses, breathing, ambient sounds only - no dialogue. 0-5s: Fast cuts montage - white flower blooming in extreme macro slow motion, green aurora dancing over lake with perfect reflection, ballet dancer en pointe mid-spin on black stage, butterfly wing scales shimmering in golden light, volcanic eruption with lava bursting upward. 5-10s: Fast cuts montage - humpback whale diving vertically underwater with sunlight rays piercing through deep blue ocean, Milky Way galaxy mirrored perfectly on still lake with lone figure at horizon, golden hour wheat swaying in backlight with warm bokeh, then cut to black screen with center frame minimalist white text: SEEDANCE 2.0
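For longer sequences, it helps to keep timing blocks contiguous and inside the 15-second maximum duration. This is a hypothetical Python sketch that assembles blocks in the "0-5s: ..." format used above. The no-gaps, no-overlaps rule is our own drafting convention, not a documented model requirement.

```python
# Hypothetical helper that assembles a timing-block prompt and sanity-checks the blocks.
def build_timed_prompt(overview: str, shots: list[tuple[int, int, str]], max_seconds: int = 15) -> str:
    """shots: (start_s, end_s, description) tuples, in order, starting at 0s."""
    expected_start = 0
    parts = [overview]
    for start, end, description in shots:
        if start != expected_start:
            raise ValueError(f"gap or overlap at {start}s (expected {expected_start}s)")
        if end <= start:
            raise ValueError(f"block {start}-{end}s has no duration")
        parts.append(f"{start}-{end}s: {description}")
        expected_start = end
    if expected_start > max_seconds:
        raise ValueError(f"blocks run to {expected_start}s, longer than {max_seconds}s")
    return " ".join(parts)


prompt = build_timed_prompt(
    "Music-driven montage, beats and ambient sound only, no dialogue.",
    [
        (0, 5, "Fast cuts montage of macro nature shots in slow motion."),
        (5, 10, "Whale diving through sunlit water, then cut to black with white text: SEEDANCE 2.0"),
    ],
)
print(prompt)
```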

Use cinematography language for camera control

Seedance 2.0 responds accurately to standard film terminology. These all work reliably:

| Term | What it does |
|---|---|
| Push-in | Camera moves forward toward subject |
| Pull-out / pull-back | Camera moves backward, reveals wider context |
| Orbit shot | Camera circles around a fixed subject |
| Tracking shot | Camera follows subject in motion |
| Dutch angle | Camera tilted on its axis, creates tension |
| Shallow depth of field | Subject sharp, background blurred |
| Handheld feel | Slight camera movement, less polished, more grounded |

Seedance 2.0: Best Prompt Examples

These are actual prompts that demonstrate what Seedance 2.0 executes reliably. Each targets a specific capability.

1. Sci-Fi Character Showcase

Use @image1 as facial reference. Keep face completely consistent - do not alter features or expression. Style: BLACK SUN sci-fi aesthetic, dark photography, biomechanical design, shallow depth of field. Character: Black biomechanical coat + black lining, ocean wave curls, exposed neck, feminine features, waist, high-level metallic sci-fi lighting, intricate technical patterns in fabric. Environment: Outdoor wasteland, gray-blue sky, windy. Camera: Medium fixed position, 30-degree side angle, slow upward pan and push-in, uncut continuous take, smooth slow-motion quality. Transformation: Deep red micro-light + dark neon glowing text, body shifts pose slightly. Effects: Dark bio-matter + black geometric glowing patterns, metallic texture, animated growth, subtle particles, red highlights. End: Hold final pose 3 seconds, cool texture finish.

What to watch for: face consistency across every frame from the reference image. Earlier models either drifted on facial features mid-clip or produced awkward pose transitions. This prompt is structured to prevent both.

2. Traditional Opera Performance

Use @image1 as the reference for the performer. Traditional stage, dim lighting. Camera starts with close-up, slowly rotates around her in slow motion, revealing costume details. She remains still, eyes locked on camera. Quick cut to side view, normal speed. She raises her arms in classic gesture, sleeves flowing gracefully. Camera slowly dollies in as she turns toward camera, ornaments sparkling under stage lights. Switch to extreme slow motion, wide shot. She extends both arms outward, sleeves billowing in the air, flowing silk brushing past the camera lens. Warm stage lighting, cinematic style.

This tests multi-speed transitions (slow motion to normal to extreme slow motion) and camera angle cuts. The model generates each speed shift at the right moment without manual editing.

3. Action Chase Sequence

Use @image1 as the opening frame. Camera follows a man in black clothing running at high speed, escaping urgently. A group of police officers chase behind him. Camera switches to side tracking shot, maintaining pace with the runner. In his panic, he crashes into a fruit stand on the roadside, scattering produce everywhere. He scrambles to his feet and continues running. Background: panicked crowd reactions and police siren sounds wailing.

This demonstrates automatic camera angle switching (front view to side tracking shot) and physics on scattered objects. You describe the camera switch in plain language, and the model executes it without an explicit cut instruction.

Seedance 2.0 and the End of Manual Shot Stitching

The biggest problem with earlier AI video models wasn't quality. It was structure. You'd generate a 5-second clip, and it looked fine in isolation. But stringing together a scene with a beginning, middle, and end meant manually stitching dozens of clips, writing your own shot list, and syncing transitions by hand. The AI generated footage. You did everything else.

Seedance 2.0 flips that. Describe a narrative (a character, a situation, a sequence of events) and the model generates connected shots with consistent characters, matched lighting, and coherent transitions across the whole sequence. You used to be the AI's post-production editor. Now the AI is your director of execution. Your job is to tell the story clearly. The model figures out how to shoot it.

Audio works the same way. Dialogue, ambient sound, and sound effects generate alongside the video, aligned to the action, not added in a separate pass. For most short-form content, what comes out is close to a finished cut.

A couple of things still worth knowing: face references work best with clean, front-facing portraits; side profiles and low-resolution images tend to drift across frames. And for complex physics close-ups (liquid, cloth, detailed object interaction), the output is better than earlier models but still not production-ready without review. Test those shots with the Fast version first.

FAQ

Do I need a paid account to use Seedance 2.0 on SeaArt AI?

Seedance 2.0 runs on credits. For regular use, a paid plan gives you enough headroom to actually work with the model: more monthly credits and priority queuing. Worth it if you're generating more than a few test clips.

What is the Fast version on SeaArt AI? Is it a different model?

It runs on the same underlying model. SeaArt AI offers a Fast configuration that generates quicker and costs fewer credits. Quality is close but slightly lower on fine detail. Use it to test ideas and nail the structure, then switch to the standard version when you're ready for the final output.

Can I upload an existing video and edit specific parts of it?

Yes. Reference your video as @video1 in your prompt and describe what you want to change: replace a character, modify an action, or adjust a segment. The model attempts to keep everything else consistent. Results vary based on how much of the original you want to preserve, so test first with the Fast model.

How does Seedance 2.0's multi-shot coherence actually work?

ByteDance built a natively multimodal architecture rather than bolting audio and video processing together after training. The model also applies RoPE (rotary positional embedding), a positional encoding originally developed for transformer language models, to keep semantic continuity across camera cuts. This is what allows a single prompt to generate a 15-second multi-shot sequence where the character looks the same in shot 1 and shot 6.

How many files can I reference in one generation?

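Up to 12 files in total, with at most 9 images, 3 videos, and 3 audio tracks. Because those per-type caps add up to 15, the 12-file total is the limit you hit first when mixing types. In practice, keeping total references below 7 gives more predictable results. Beyond that, the model tends to deprioritize some inputs without any indication of which ones.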
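If you plan uploads ahead of time, the limits above are easy to check before you open the app. This is a hypothetical Python sketch: the caps are the ones stated in this guide and may change on SeaArt AI, and the "below 7" guidance is reported as a warning rather than an error because it is practical advice, not a hard limit.

```python
from collections import Counter

# Limits as stated in this guide; they may change on SeaArt AI.
LIMITS = {"image": 9, "video": 3, "audio": 3}
MAX_TOTAL = 12
RECOMMENDED_BELOW = 7  # practical guidance, not a hard limit


def check_references(kinds: list[str]) -> tuple[list[str], list[str]]:
    """Check planned uploads, given one kind ('image', 'video', 'audio') per file.

    Returns (errors, warnings): errors break the stated limits, warnings only
    flag setups that tend to give less predictable results.
    """
    counts = Counter(kinds)
    errors = [f"unsupported file type: {kind}" for kind in counts if kind not in LIMITS]
    for kind, cap in LIMITS.items():
        if counts[kind] > cap:  # Counter returns 0 for kinds that never appear
            errors.append(f"too many {kind} files: {counts[kind]} > {cap}")
    total = len(kinds)
    if total > MAX_TOTAL:
        errors.append(f"too many files in total: {total} > {MAX_TOTAL}")
    warnings = []
    if total >= RECOMMENDED_BELOW:
        warnings.append(f"{total} references; results tend to be more predictable below {RECOMMENDED_BELOW}")
    return errors, warnings


# Nine images plus three videos sits exactly at the 12-file cap:
# allowed, but above the practical guidance, so it produces one warning.
errors, warnings = check_references(["image"] * 9 + ["video"] * 3)
print(errors, warnings)
```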

Can I use Seedance 2.0 for commercial projects?

Check SeaArt AI's current terms of service for commercial usage rights. License terms for AI-generated video output vary and can change with model updates. SeaArt AI's general commercial terms apply, but verify before publishing.

Conclusion

Think about what it took to shoot a commercial five years ago. Production studio. Lighting crew. Actors. Equipment rentals. A single rain delay could kill a week's schedule. That was the baseline, and it was only accessible to companies with real budgets.

The gap has closed fast. A strong idea and a clear prompt now get you further than a mid-size production budget used to. The Seedance 2.0 review tested exactly that ceiling: fight choreography, cinematic sequences, and audio-synced cuts, all generated on the platform.

The model doesn't replace creative judgment. It executes it. What you choose to make, and how clearly you can describe it, still determines the outcome. That part hasn't changed.

The model is available on SeaArt AI now. Start creating.