Wan 2.7 Review: Overhyped or the Best AI Video Model of 2026?

Every creator conversation this quarter eventually circled back to one model: Wan 2.7.

It's become the single most requested review on SeaArt AI.

We didn't test it just because it was the loudest launch. We tested it because the same complaint kept surfacing: most AI video models still can't hold a consistent character across two cuts. Is Wan 2.7 actually different?

So we ran 50+ full generations on the Plus Plan - testing everything from opening shots to character consistency, motion control, and cinematic language. Here's what actually held up.

Wan 2.7's real shift isn't flashier visuals. It's control.

You can finally tell the model exactly where the video starts, where it ends, and what the character must look like - and it actually listens.

What Is Wan 2.7?

Wan 2.7 is the newest addition to Alibaba's Wan video generation series, released publicly in late March 2026.

Earlier versions, Wan 2.1 and Wan 2.2, were open-sourced under Apache 2.0 on GitHub, allowing quick ComfyUI integration and self-hosted runs. Whether 2.7 will follow the same path hasn't been officially confirmed yet.

It generates 1080p video up to 15 seconds with text-to-video, image-to-video, and native audio output built in.

One big question for teams planning local deployment: when will the open weights drop? Based on the consistent pattern of the Wan family, expect cloud launch first, then open weights within 4–8 weeks. Counting from the late-March launch, that points to roughly late April through late May 2026. Watch the official Wan-Video GitHub for the exact date.

The core change from every earlier version: the number of control inputs the model accepts in a single generation call. Previous Wan models gave you a prompt and a starting image. Wan 2.7 gives you endpoint anchors, multi-reference grids, and instruction-based editing on top of that. It's not just a better generator - it's a different workflow.

| Quick Snapshot | Details |
|---|---|
| Best For | Filmmakers, YouTubers, agencies needing multi-shot video with audio |
| Output | 1080p, up to 15 seconds, native audio included |
| Starting Price | ~$10 (100 credits, never expire) |
| Free Trial | ~15 credits, no credit card required |
| Key Strengths | First/last frame, 9-grid I2V, subject+voice cloning, instruction editing |
| Main Weakness | Steeper learning curve; raw physics trails Sora 2 |

Why Wan 2.7 Works Differently: The Architecture

The Wan series runs on a Diffusion Transformer (DiT) architecture with a Full Attention mechanism. In practical terms, the model processes spatial and temporal relationships across the entire video sequence at once - not frame by frame. That is why character identity stays stable across 15 seconds without the drift you see in older diffusion-based video models.

Wan 2.7 keeps this same foundation but expands what the model can accept in a single call - nine images in a structured grid, a voice audio clip, defined start/end frames. More anchoring inputs per generation means fewer passes to reach a usable result.
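
To make the "entire sequence at once" idea concrete, here is a minimal sketch that counts how many token pairs attention can relate under two layouts: full attention over every frame, and attention restricted to each frame on its own. The frame and patch counts are illustrative assumptions, not Wan's actual token dimensions, and `attention_pairs` is a hypothetical helper rather than anything from the Wan codebase.

```python
def attention_pairs(frames: int, patches_per_frame: int) -> dict:
    """Count query-key pairs under full vs. per-frame attention."""
    tokens = frames * patches_per_frame
    # Full attention: every token can attend to every token in the clip.
    full = tokens * tokens
    # Per-frame attention: a token only attends to tokens in its own frame.
    per_frame = frames * patches_per_frame ** 2
    return {
        "tokens": tokens,
        "full": full,
        "per_frame": per_frame,
        "cross_frame": full - per_frame,  # pairs that link different frames
    }


# Illustrative sizes only: 4 frames of 6 patches each.
stats = attention_pairs(frames=4, patches_per_frame=6)
print(stats)
```

The `cross_frame` count is the part a frame-by-frame layout never sees. Those cross-frame links are what let a model compare a face in the first second with the same face in the last one.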

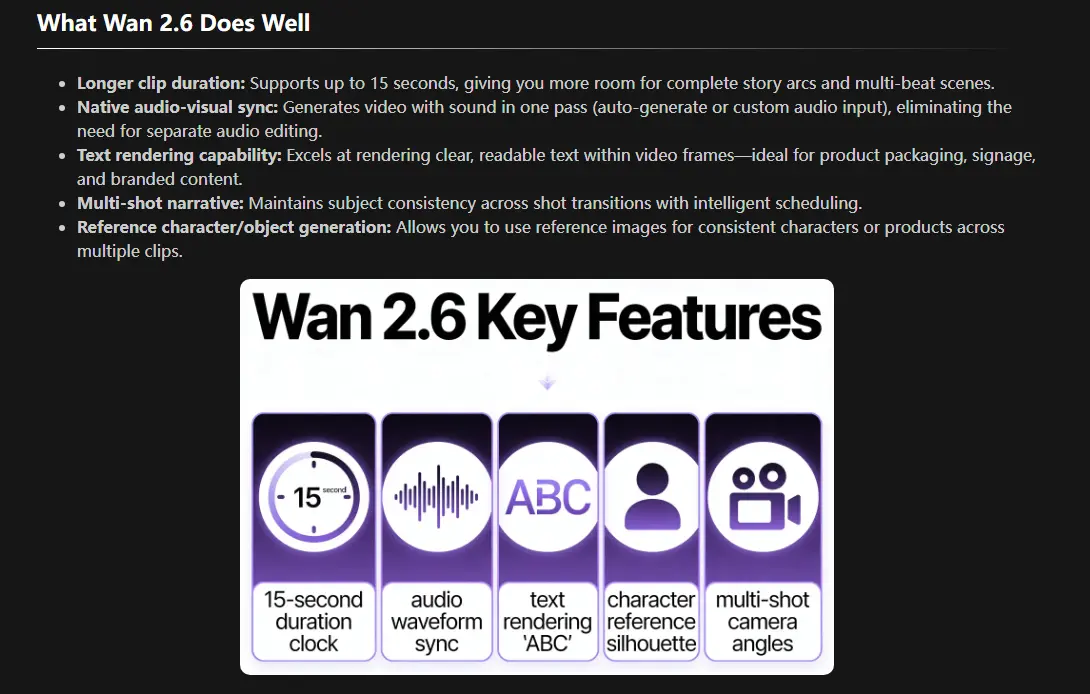

Five Core Improvements Over Wan 2.6

The jump from 2.6 to 2.7 is not purely a quality bump. Five things improved in ways that matter in real production work.

1. Sharper Visual Quality

Skin textures, fabric movement, and lighting gradients now hit commercial 1080p standards. Earlier Wan models occasionally produced that "video game CGI" quality on certain subjects - 2.7 addresses most of that.

2. Better Motion Coherence

Characters drift less. Physics behave more predictably. Fast movement holds up better. This was consistently the top complaint about Wan 2.5 and 2.6, and the improvement is noticeable.

3. Native Audio Built In

Background music, ambient sound, and character vocals sync with the scene from the start - not layered in afterward. For anyone who has manually matched audio to AI video frame by frame, this is the most immediately practical upgrade.

4. Stronger Style Consistency

The model holds cinematic realism, anime aesthetics, and illustrated styles more reliably across frames than 2.6 did - useful for content that needs a recognizable visual identity throughout.

Wan 2.6 key features overview. Source: Seedance 1.5 Pro vs Wan 2.6 Review - SeaArt AI

5. Improved Temporal Consistency

Fewer flickering faces, fewer mid-clip wardrobe changes, fewer scenes where the subject morphs slightly between cuts. Keeping a character looking the same from frame to frame is noticeably more reliable.

What's New in Wan 2.7 AI Video Model

These capabilities were not available in earlier Wan versions and cover the main reasons creators are switching from 2.6.

First and Last Frame Control

You give the model two images: what the video should look like at the start, and what it should look like at the end. The model builds everything in between - consistent subject identity, natural movement, and a coherent transition without additional passes.

This solves one of the most persistent frustrations with AI video: you never quite know where the scene will go. With endpoint anchors, you do.

Wan 2.1 had a separate "first and last frame" model checkpoint (Wan2.1-FLF2V-14B). In 2.7, this capability is integrated directly into the main model - so you're not switching between checkpoints.

Sample prompt for this mode:

Subject reference: @image | Start frame: rider on Bajaj scooter approaching from 30 feet | End frame: rider at arm's length, hand raised, speaking to camera

One continuous shot. Camera holds static as the scooter weaves through a busy Mumbai street toward the lens. Colonial-era buildings line both sides. Crowd and traffic blur in background. As the rider reaches close-up distance, he raises his right hand and says: "Hello, my friend." Lip sync to dialogue. Native audio: street noise, engine hum, crowd, then clean dialogue.
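
If you reuse this layout often, a small helper keeps the header consistent. The sketch below only assembles the prompt text in the same "Subject reference | Start frame | End frame" shape as the sample. `first_last_prompt` is a hypothetical name, and how the platform binds `@image` to an uploaded file is not covered here.

```python
def first_last_prompt(start_frame: str, end_frame: str, scene: str,
                      subject_ref: str = "@image") -> str:
    """Assemble a header line plus scene description for first/last frame mode."""
    if not start_frame.strip() or not end_frame.strip():
        raise ValueError("both a start frame and an end frame description are required")
    header = " | ".join([
        f"Subject reference: {subject_ref}",
        f"Start frame: {start_frame.strip()}",
        f"End frame: {end_frame.strip()}",
    ])
    # Header on the first line, scene description on the second.
    return f"{header}\n{scene.strip()}"


prompt = first_last_prompt(
    start_frame="rider on Bajaj scooter approaching from 30 feet",
    end_frame="rider at arm's length, hand raised, speaking to camera",
    scene="One continuous shot. Camera holds static as the scooter weaves toward the lens.",
)
print(prompt)
```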

9-Grid Image-to-Video

Upload a 3x3 arrangement of nine reference images. Wan 2.7 converts them into a single continuous video, treating each panel as a distinct scene with smooth transitions between them.

A few practical details help when preparing grid inputs. Each of the nine panels should ideally share the same aspect ratio as your target video output - mixing portrait and landscape images within a single grid is likely to produce inconsistent framing across scenes. Standard image formats (JPG, PNG) are accepted. The grid reads left-to-right, top-to-bottom, so the sequence of your panels determines the sequence of scenes in the output. Minimum resolution per panel has not been officially specified, but submitting images below 512px on the short edge is likely to reduce output detail. The exact API parameter structure for grid inputs had not been formally published as of this writing - if you are building automated workflows around this feature, confirm the endpoint schema before shipping anything.
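
The checks above are easy to automate before you upload anything. This is a minimal pre-flight sketch, assuming you already know each panel's pixel dimensions. The `Panel` class, `check_grid` function, 2% ratio tolerance, and 16:9 target are illustrative choices, and the 512px floor is this article's rule of thumb rather than an official limit.

```python
from dataclasses import dataclass


@dataclass
class Panel:
    name: str
    width: int
    height: int


MIN_SHORT_EDGE = 512  # rule of thumb from this article, not an official spec


def check_grid(panels, target_ratio=16 / 9, tolerance=0.02):
    """Return a list of problems; an empty list means the grid passes these checks."""
    problems = []
    if len(panels) != 9:
        problems.append(f"expected 9 panels, got {len(panels)}")
    for index, panel in enumerate(panels):
        # Reading order is left-to-right, top-to-bottom.
        row, col = divmod(index, 3)
        where = f"{panel.name} (row {row + 1}, col {col + 1})"
        ratio = panel.width / panel.height
        if abs(ratio - target_ratio) / target_ratio > tolerance:
            problems.append(
                f"{where}: aspect ratio {ratio:.2f} differs from target {target_ratio:.2f}"
            )
        if min(panel.width, panel.height) < MIN_SHORT_EDGE:
            problems.append(f"{where}: short edge below {MIN_SHORT_EDGE}px")
    return problems


panels = [Panel(f"scene_{i + 1}.png", 1920, 1080) for i in range(9)]
panels[4] = Panel("scene_5.png", 1080, 1920)  # portrait image in the centre slot
panels[8] = Panel("scene_9.png", 640, 360)    # too small on the short edge
for problem in check_grid(panels):
    print(problem)
```

Because list order maps directly to reading order, reordering the list is also how you would reorder scenes in the output.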

Subject and Voice Reference Cloning

Upload a reference image of a character and a short audio clip. Wan 2.7 replicates both the visual appearance and the vocal characteristics in the generated video.

The use cases are genuinely practical: brand mascots, YouTube creators who want to scale content without being on camera, marketing teams who need spokesperson videos at volume.

Wan 2.6 introduced a similar capability through its R2V model (a separate endpoint for character reference video). Wan 2.7 integrates this more tightly, making it part of the main generation flow rather than a separate tool.

Instruction-Based Editing

Upload an existing video clip and type what you want changed. "Change the background to night." "Swap the jacket to red." Wan 2.7 applies the edit while keeping the rest of the clip intact.

This is the feature with the most uncertainty around it. Temporal consistency on instruction edits - especially changes that touch moving elements like clothing - is where similar tools have historically degraded. Early results look promising, but give it a few weeks of community testing before building production workflows around this specific feature.

Video Recreation

Provide a reference video. Describe what you want changed - the character, the style, the environment. Wan 2.7 preserves the original motion structure and camera path while rebuilding the visual layer on top.

Useful for adapting trending video formats with your own brand assets, or converting live footage into an animation style. This feature appeared in several third-party Wan 2.7 summaries, but official Alibaba documentation had not fully confirmed its behavior as of publication. Treat it as promising but exploratory for now.

Wan 2.7 Video Showcases

These three scenarios show where Wan 2.7's new features perform well - and where the edge cases are worth knowing before you commit to a workflow.

Scenario 1: Multi-Shot Consistency Using Subject Reference

What to expect: Vehicle identity locked across three different camera angles and lighting conditions - same truck model, paint, chrome, and proportions - without re-uploading the reference image between shots.

Example prompt structure:

Subject reference: @image. Maintain consistent truck model, color, and chrome detail across all shots. [00:00–00:05] Wide tracking shot - a vintage Ford pickup tears across a desert road at dusk, explosions erupting behind it, missile trails streaking across a burnt-orange sky, dust billowing from the rear tires. [00:05–00:10] Cut to - front-facing shot, truck driving straight toward camera, headlights on, fireball rising in the background, debris scattering across frame. [00:10–00:15] Final shot - low-angle side view, truck fishtailing on gravel, smoke and fire filling the horizon, camera holds as the truck clears frame. Native audio: engine roar, explosion rumble, gravel scatter.

What to watch for: Vehicle shape and paint tone stay consistent across camera angle changes when the reference image is shot in even, natural light. The model handles the side-to-front cut without distorting the truck's proportions. The known edge case: heavy motion blur on the vehicle body during fast action can cause the model to simplify chrome and grille detail. Keeping a motion blur note in the prompt - "sharp on truck body, blur on background only" - preserves the key identifying details across all three shots.
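
Timestamped prompts like this one are tedious to keep in sync by hand. The sketch below builds the `[mm:ss–mm:ss]` segments from shot durations and refuses anything longer than the 15-second limit. `build_multishot_prompt` and `timestamp` are hypothetical helpers that only produce prompt text in the layout shown in this scenario.

```python
def timestamp(seconds: int) -> str:
    """Format a whole number of seconds as mm:ss."""
    minutes, secs = divmod(seconds, 60)
    return f"{minutes:02d}:{secs:02d}"


def build_multishot_prompt(header, shots, audio, max_seconds=15):
    """shots is a list of (duration_seconds, description) tuples, in play order."""
    total = sum(duration for duration, _ in shots)
    if total > max_seconds:
        raise ValueError(f"shots total {total}s, above the {max_seconds}s clip limit")
    parts = [header]
    start = 0
    for duration, description in shots:
        end = start + duration
        parts.append(f"[{timestamp(start)}–{timestamp(end)}] {description}")
        start = end
    parts.append(f"Native audio: {audio}")
    return " ".join(parts)


prompt = build_multishot_prompt(
    header=(
        "Subject reference: @image. Maintain consistent truck model, color, and "
        "chrome detail across all shots. Sharp on truck body, blur on background only."
    ),
    shots=[
        (5, "Wide tracking shot - vintage Ford pickup on a desert road at dusk."),
        (5, "Cut to - front-facing shot, truck driving straight toward camera."),
        (5, "Final shot - low-angle side view, truck fishtailing on gravel."),
    ],
    audio="engine roar, explosion rumble, gravel scatter.",
)
print(prompt)
```

Putting the motion blur note in the header means it applies to every shot, not only the one where you remembered to add it.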

Scenario 2: One-Shot Character Dialogue with Subject and Voice Reference

What to expect: A single uncut shot where the character approaches the camera, delivers a spoken line with synced audio, and identity stays locked throughout.

Subject reference: @image | Voice reference: @audio

Single continuous shot. A woman stands at the edge of a rain-soaked rooftop at night, her back to camera. City neon reflects in every puddle across the concrete behind her. Camera pushes slowly toward her face over 8 seconds. At 00:06, she turns - looks directly into the lens - and says: "You were never supposed to find me here." Lip sync to dialogue. Native audio: rain on concrete, distant traffic hum, then clean isolated dialogue on the final line. 10 seconds, 1080p.

What to watch for: Identity lock holds well when the reference image is front-facing and evenly lit. The failure point is shadows - heavy facial shadow in the reference causes detail loss during the turn. A clean, flat-lit reference resolves this.

On audio: Lip sync is functional but not frame-perfect. Short lines at natural pace work well; fast delivery above ~150 WPM causes drift. Multiple simultaneous speakers collapse into one dominant voice. Background music layered over dialogue is the hardest case - the model tends to let music overwhelm speech, so plan for a manual ducking pass in post.
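
A quick pacing check can catch lines that are likely to drift before you spend credits on them. This is a rough sketch that counts whitespace-separated words. The ~150 WPM threshold is the figure observed in this review, not a published limit, and both function names are hypothetical.

```python
WPM_LIMIT = 150  # threshold observed in this review, not an official spec


def words_per_minute(line: str, seconds: float) -> float:
    """Speaking pace if the line must fit inside the given time window."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return len(line.split()) * 60 / seconds


def min_seconds(line: str, limit: float = WPM_LIMIT) -> float:
    """Shortest window that keeps the line at or under the pace limit."""
    return len(line.split()) * 60 / limit


line = "You were never supposed to find me here."
for window in (4.0, 3.0):
    pace = words_per_minute(line, window)
    verdict = "ok" if pace <= WPM_LIMIT else "likely to drift"
    print(f"{window:.0f}s window: {pace:.0f} WPM ({verdict})")
print(f"shortest window at {WPM_LIMIT} WPM: {min_seconds(line):.1f}s")
```

In the Scenario 2 prompt, the line starts at 00:06 of a 10-second clip, so it has at most about 4 seconds. That works out to 120 WPM for this eight-word line, comfortably under the threshold.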

Scenario 3: Native Audio Sync on a High-Motion Action Sequence

What to expect: Native audio generation across fast-cut action, not just ambient background sound.

Example prompt structure:

A motorcycle tears through a highway tunnel at night, sparks trailing off the exhaust. [00:00–00:04] Rear tracking shot, tunnel lights strobing overhead, engine roar rising. [00:04–00:08] Side orbit shot as the bike leans into a sharp curve, tires screeching against wet concrete. [00:08–00:12] Low front-angle shot, headlight cutting through the dark, wind and mechanical sound peaking. Audio: motorcycle engine roar, tire screeching, tunnel echo, wind rush synced to speed changes.

What to watch for: Engine roar tracks well against visual speed, and tunnel echo is added automatically without needing to be prompted. The known weak point: audio on cornering shots can run slightly behind the visual lean - not noticeable on mobile, but visible on a desktop timeline. On complex multi-sound scenes, native audio gets you 80-90% of the way there. A quick manual sync pass is still worth building into delivery workflows.

Pricing

Wan 2.7 uses a credits-based pricing model. Commercial use is included on all paid tiers.

| Plan | Cost | Credits | Approx. Cost per 5-Second Video |
|---|---|---|---|
| Free Trial | $0 | ~15 credits | Free |
| Starter | ~$10 | 100 credits | $0.40-0.60 |
| Basic/Plus | ~$30-$50 | 300-600 credits | $0.40-0.60 |
| Pro | Varies | Higher volume | Lower per-video cost |

Credits do not expire. Most AI video tools reset unused capacity at the end of each billing cycle - with Wan 2.7, what you buy stays available. For sporadic or project-based usage, that structure is more practical than a monthly subscription.

What Does a Real Project Actually Cost?

Per-video pricing sounds cheap until you run a real project. A 60-second brand video typically breaks down into 4 to 6 distinct clips at 10 to 15 seconds each. At roughly 12–18 credits per clip, a single clean pass runs 60 to 100 credits. Some clips take 2 to 3 generation attempts to get a keeper, and with those retries factored in, the working budget per finished 60-second video sits at 75 to 150 credits.

| Project Type | Clips Needed | Est. Credits (incl. retries) | Approx. Cost on Plus Plan |
|---|---|---|---|
| Single 60-sec brand video | 4–6 clips | 75–150 credits | ~$6–13 |
| 4 videos/month | 16–24 clips | 300–600 credits | ~$25–50 |
| Agency: 10 videos/month | 40–60 clips | 750–1,500 credits | ~$63–125 |
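
To adapt these estimates to your own hit rate, the arithmetic is simple enough to script. The sketch below uses the clip counts and per-clip credits quoted in this section. The function names are hypothetical, and the $50 for 600 credits plan point is an assumption taken from the upper end of the Basic/Plus row.

```python
def credit_range(clips, credits_per_clip, attempts):
    """Each argument is a (low, high) pair; returns the (low, high) credit total."""
    low = clips[0] * credits_per_clip[0] * attempts[0]
    high = clips[1] * credits_per_clip[1] * attempts[1]
    return low, high


def dollars(credits, plan_price, plan_credits):
    """Cost of a credit total at a plan's effective per-credit rate."""
    return credits * plan_price / plan_credits


clips = (4, 6)       # clips per 60-second video
per_clip = (12, 18)  # credits per 10-15 second clip

clean = credit_range(clips, per_clip, (1, 1))
retried = credit_range(clips, per_clip, (2, 3))
print("clean pass:", clean)
print("every clip generated 2-3 times:", retried)

# Assumed plan point: $50 for 600 credits.
for credits in (75, 150):
    print(credits, "credits ->", round(dollars(credits, 50, 600), 2), "USD")
```

Generating every clip 2 to 3 times lands well above 75–150 credits, so that budget is best read as assuming most clips work within one or two tries. If your references are weaker or your shots more complex, raise the `attempts` pair and re-run the numbers.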

Does Wan 2.7 Actually Beat Sora 2 and Kling?

Wan 2.7 vs Sora 2 vs Kling 2.6 vs Veo 3.1 - Quick Head-to-Head

| Feature | Wan 2.7 | Sora 2 | Kling 2.6 | Veo 3.1 |
|---|---|---|---|---|
| Resolution | 1080p | 1080p | 1080p | 1080p |
| Max Duration | 15 sec | Up to 20 sec | 10 sec | 8 sec |
| Native Audio | Yes | Limited | Yes | Yes |
| First/Last Frame Control | Yes | No | Yes (via reference video) | No |
| 9-Grid Multi-Scene Input | Yes | No | No | No |
| Subject + Voice Cloning | Yes | No | No | No |
| Instruction-Based Editing | Yes | Yes | No | Limited |
| Open Source (planned) | Expected, not yet confirmed | No | No | No |
| Credits Expire | Never | Monthly | Monthly | Monthly |
| Starting Price | ~$10 (non-expiring) | $20/mo | $10/mo | Varies |
| Physics / Motion Realism | Good | Excellent | Very Good | Good |
| Ease of Use | Steeper learning curve | Medium | Highest | Medium |

Where Wan 2.7 wins: precise shot steering, character consistency across shots, native audio output, and a pricing structure that doesn't punish irregular usage.

Where competitors have an edge: Sora 2 handles rapid physical dynamics and fast-action physics better - if your content needs that kind of raw realism, the gap is real. Kling is more approachable if you want reliable one-click generation without structured prompting. If you want to compare them side by side, SeaArt AI's model library lets you access multiple AI video models without switching platforms.

Is Wan 2.7 Right for Your Workflow?

It's a strong fit for filmmakers, YouTubers, marketers, and agency creators who need multi-shot videos with audio and precise control. The feature set matches how professional content workflows actually operate - you have a storyboard, you have a character, you have a defined start and end for each scene. Wan 2.7 works with that structure rather than against it.

Skip it if you want dead-simple one-click video from a single prompt. For that use case, Kling's standard workflow is still more forgiving and faster to get decent results from. Also skip it if you're primarily making content where raw physics realism matters - think sports, fast action, or highly dynamic camera work. Sora 2 still has a slight lead there.

The learning curve is not a small thing. Expect to spend real time getting good results from the 9-grid and subject reference features before they feel natural. This won't work for everyone, and that's worth knowing before committing to it.

Conclusion

Will Wan 2.7 replace video editors? The short answer is no - not in the way the headlines suggest. Wan 2.7 is a serious tool, but it doesn't eliminate the need for people who know what a good shot looks like. What it does do is compress the distance between an idea and a watchable clip. A solo creator with a clear storyboard can now produce multi-shot sequences with consistent characters and synced audio without a crew. That's a real shift, even if "replacing editors" is an exaggeration.

What it cannot do: replace judgment. The model follows your inputs - but if your prompts are vague or your reference images are weak, the output reflects that. The ceiling on quality is high, but so is the floor on what you need to bring to the table. For developers and self-hosted workflows, the open weights release will be the real deciding factor. If you already use SeaArt AI to generate and refine reference images, pairing that with Wan 2.7’s image-to-video and subject-reference features is a genuinely effective workflow. For creators willing to learn the structured prompting approach, Wan 2.7 is worth the investment. For everyone else, the simpler tools still exist.

The fastest way to know if it fits your workflow: run one first/last frame test on a simple scene using an AI video generator. Most creators have their answer within the first session.